PDFs are everywhere in business. In many ways they’re great: they can be viewed on virtually any device without compromising the formatting of the original document, they support interactive elements such as forms and e-signatures, and they can be compressed to smaller file sizes with little loss of quality.

However, PDFs can also be a real headache for businesses that are looking to unlock the full potential of their data. They are a notoriously difficult format from which to retrieve information, often requiring time-consuming and error-prone manual extraction methods.

These methods can carry significant costs, divert time and effort away from strategic or more revenue-generating activities, and aren’t scalable without increasing headcount.

Below you’ll learn:

- Why extracting useable, machine-readable data from PDFs is such a challenge

- How others have attempted to solve the PDF data extraction challenge

- How Raw Knowledge has simplified this challenge with our Managed Smart Data Platform

Why are PDFs such a challenge?

To understand why PDFs remain such a challenge for machines to read, it is helpful to understand why the format was created in the first place.

In the early days of electronic file sharing, people struggled with documents that looked very different from one computer to another. What looked great on one person’s Windows desktop could be completely unreadable on a Mac, and vice versa. This was because different organisations had their own systems, formats and applications, making cross-platform compatibility a nightmare.

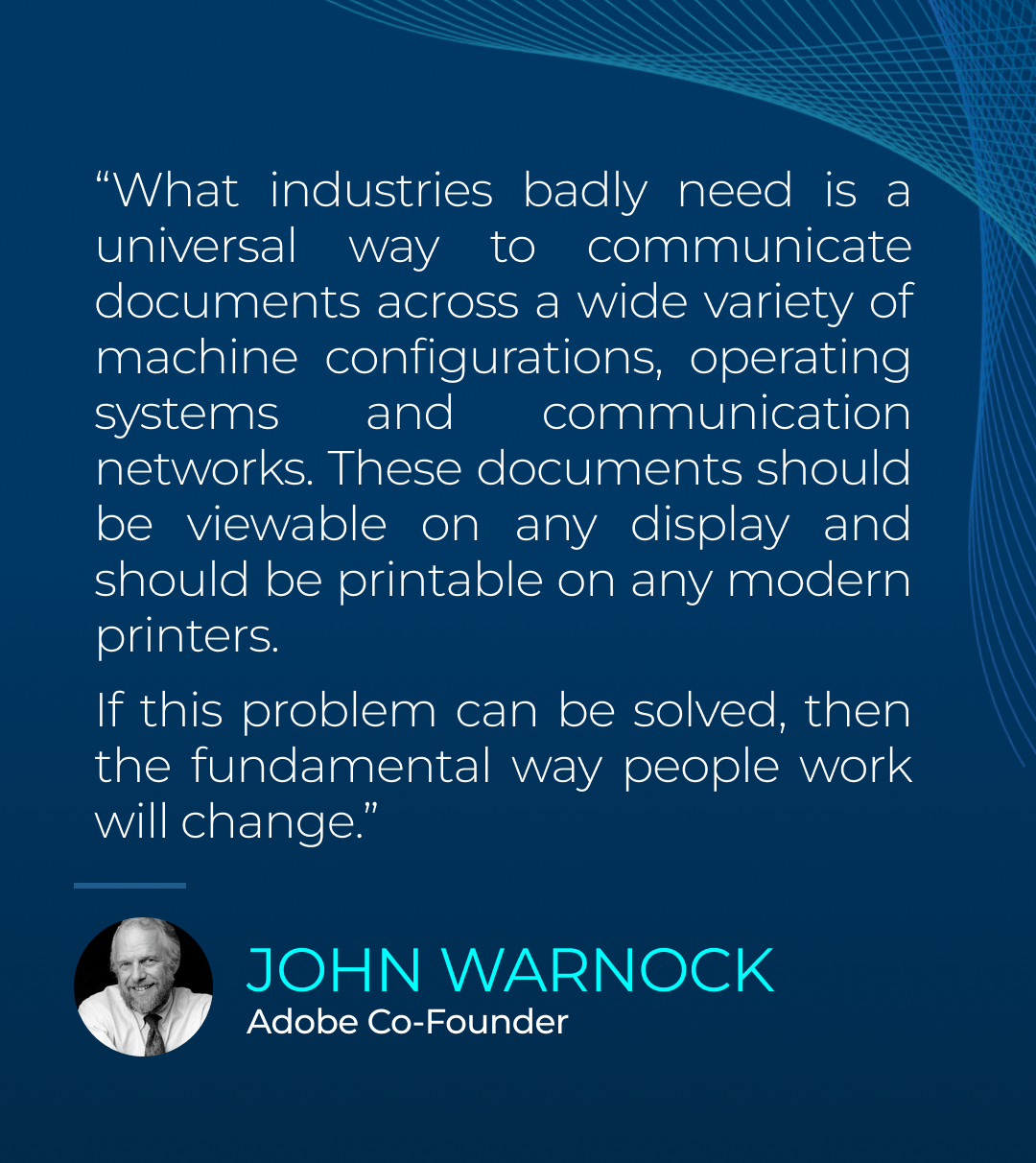

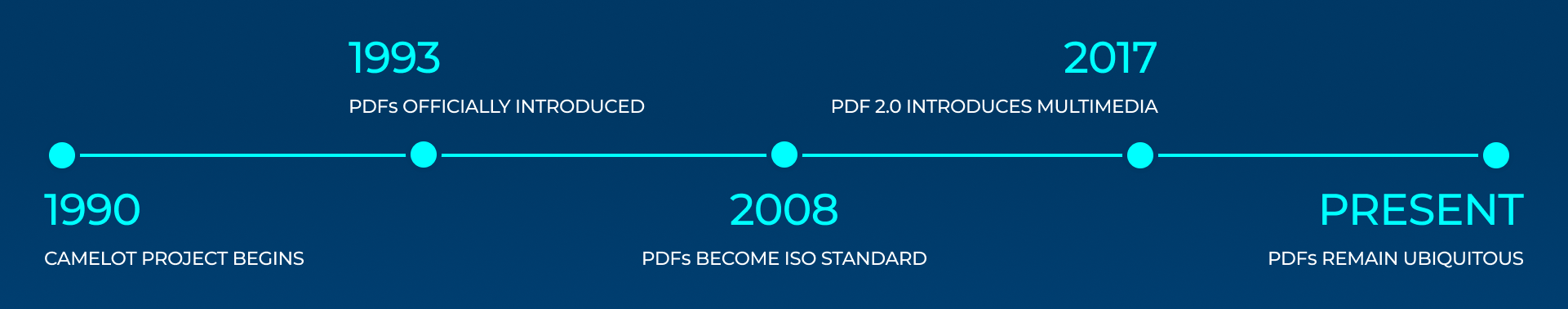

It was this challenge that Adobe co-founder John Warnock wanted to solve when he embarked on his ambitious Camelot Project in the early 1990s.

Warnock wanted to find a way to communicate documents across a wide variety of machine configurations, operating systems and communication networks. In a paper accompanying the project, he said that if the problem could be solved then the way people work would fundamentally change.

Eventually, the Camelot Project created the Portable Document Format, or PDF. It officially launched as part of Adobe Acrobat 1.0 in 1993 and allowed any font, image or layout to be shared easily across different devices with the confidence of consistency. However, because PDFs were built with visual consistency for the human eye as their primary concern, they essentially acted as electronic pictures of information, recreating documents with vector or bitmap graphics and with text that was not stored as plain, structured text.

This representation was largely unreadable for machines because it lacked semantic structure. When someone tried to extract information from a PDF and put it into another file, the result would often be littered with errors and improperly formatted. Sometimes information couldn’t be extracted at all.

How have others attempted to solve the PDF data extraction challenge?

Tagging

In the 2000s, Adobe introduced the ability to add tags to a PDF to optimise the format’s accessibility. Tags made it easier for machines to identify content elements like paragraphs, headings, tables or images. They also provided logical structure and hierarchy to the content, meaning machines could more easily understand the flow of information.
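
To make the idea of logical structure concrete, here is a minimal sketch of how a tagged document can be thought of as a tree. The tag names (`Document`, `H1`, `P`, `Table`, `TR`, `TH`, `TD`) follow the standard PDF structure types, but the `TagNode` class, the `reading_order` function and the sample content are hypothetical and only illustrate the concept. This is not how a real PDF library exposes tags.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class TagNode:
    """One element in a simplified, hypothetical structure tree."""
    tag: str                      # structure type, e.g. "H1", "P", "Table"
    text: str = ""                # text content carried by this element
    children: list[TagNode] = field(default_factory=list)


def reading_order(node: TagNode) -> list[tuple[str, str]]:
    """Walk the tree depth-first and return (tag, text) pairs in logical order."""
    items = [(node.tag, node.text)] if node.text else []
    for child in node.children:
        items.extend(reading_order(child))
    return items


# Hypothetical sample document: a heading, a paragraph and a small table.
document = TagNode("Document", children=[
    TagNode("H1", "Quarterly report"),
    TagNode("P", "Revenue grew in the third quarter."),
    TagNode("Table", children=[
        TagNode("TR", children=[TagNode("TH", "Region"), TagNode("TH", "Revenue")]),
        TagNode("TR", children=[TagNode("TD", "North"), TagNode("TD", "120")]),
    ]),
])

# Because every element is labelled, a machine can pick out just the headings.
headings = [text for tag, text in reading_order(document) if tag == "H1"]
```

With labels like these in place, software such as a screen reader can jump between headings or read a table cell by cell, rather than guessing structure from where the ink sits on the page.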

Tagging was particularly useful for user-assistive technology, such as screen readers or braille displays, as well as in the world of archiving as the newly introduced metadata elements made PDFs more searchable and easier to retrieve.

However, tagging often needed a human editor to go through the PDF and manually tag each element. This demanded a lot of time and expertise, especially when the tagging had to meet specific accessibility standards. As a result, its adoption was never widespread.

Optical Character Recognition

Optical Character Recognition, or OCR, is another popular way of converting images of text into something readable for machines. The technology has existed in various forms for decades, and in the 1970s it was notably used in reading machines for blind and visually impaired people. It works by using the patterns of light and dark in an image, matching them against the shapes of letters, and then converting this information into machine-readable text.
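
The matching step described above can be sketched with a toy example. The 3x5 glyph templates, the `similarity` and `recognise` functions and the noisy sample below are all hypothetical and far simpler than any production OCR engine. They only illustrate the idea of comparing light and dark pixels against known letter shapes.

```python
# Hypothetical 3x5 reference glyphs: "#" is a dark pixel, "." is a light one.
TEMPLATES = {
    "I": ["###", ".#.", ".#.", ".#.", "###"],
    "L": ["#..", "#..", "#..", "#..", "###"],
    "T": ["###", ".#.", ".#.", ".#.", ".#."],
}


def similarity(glyph, template):
    """Fraction of pixels on which the glyph and the template agree."""
    pairs = [
        (g, t)
        for g_row, t_row in zip(glyph, template)
        for g, t in zip(g_row, t_row)
    ]
    return sum(g == t for g, t in pairs) / len(pairs)


def recognise(glyph):
    """Return the best-matching character and its similarity score."""
    best = max(TEMPLATES, key=lambda ch: similarity(glyph, TEMPLATES[ch]))
    return best, similarity(glyph, TEMPLATES[best])


# A scanned "T" with one stray dark pixel on the left of the middle row.
noisy_t = ["###", ".#.", "##.", ".#.", ".#."]
char, score = recognise(noisy_t)
```

Here the noisy glyph is still closest to the "T" template, but its score drops below a perfect match. As noise increases, the gap between the best and second-best template narrows and misreads become more likely.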

The technology is far from perfect, often struggling with complex formats, poor-quality images or unusual patterns. As a result, humans have had to stay involved to make sure the information pulled from a document using OCR makes sense.

Large Language Models

In the last decade, large language models (LLMs) have emerged as powerful tools for turning PDFs into machine-readable documents. These models are trained on massive amounts of data and eventually become incredibly proficient at recognising and predicting patterns within the data fed to them. This pattern recognition makes them useful in accurately ‘understanding’ what we as people can see on a PDF document. The models can convert this into something that is retrievable, searchable and understandable for machines.

How the Managed Smart Data Platform changes the game for PDF data extraction

Around 2.5 trillion PDFs are created every year. That’s a lot of data that could end up locked away from use because businesses don’t have access to accurate, efficient data extraction tools.

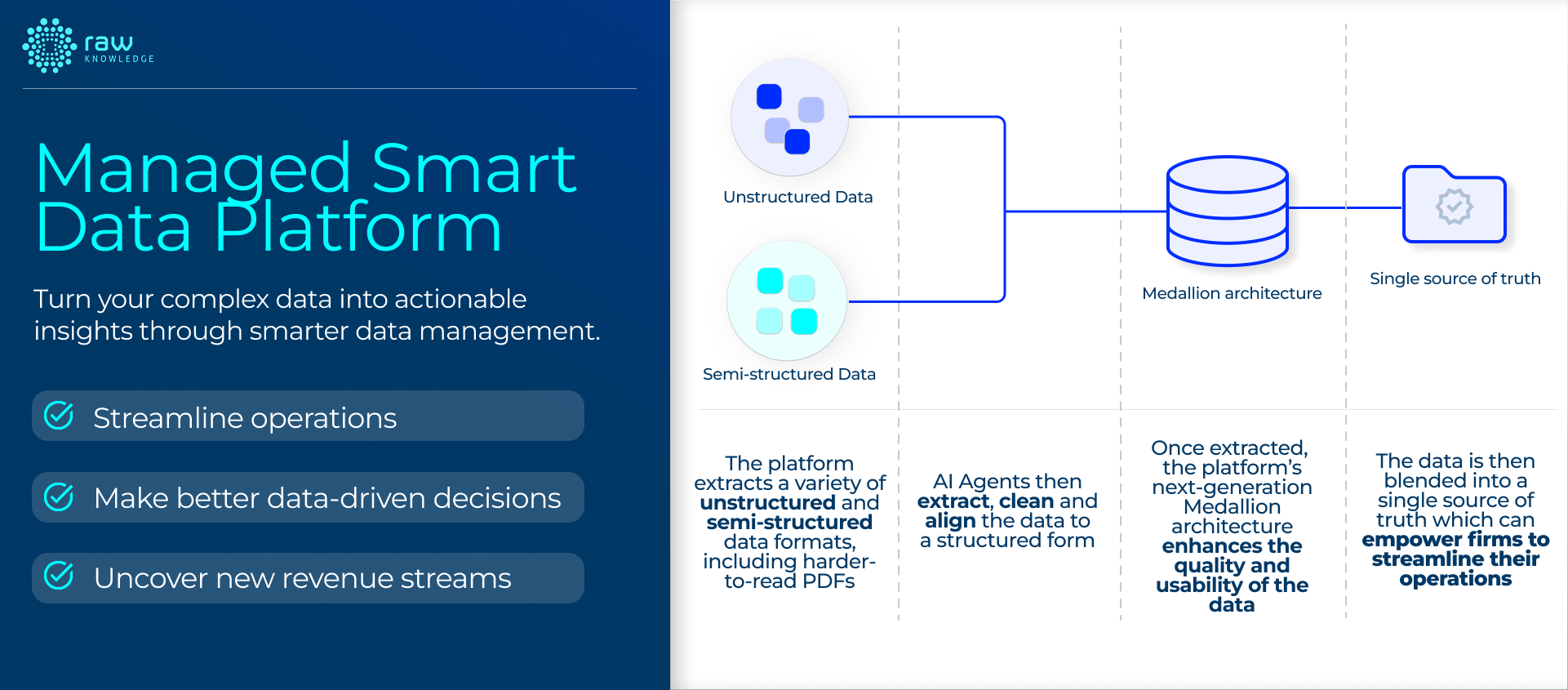

The Managed Smart Data Platform allows data insights to be gathered from harder-to-read documents, like PDFs or scanned documents, thanks to its AI-powered data extraction, ingestion and validation capabilities. So, how does it work?

Users can upload their PDF or scanned document directly to our Managed Smart Data Platform. It will then use multiple methods and a variety of parsing tools to pull the raw data from the document and place what it extracts into a data frame, a 2-dimensional table that acts much like a spreadsheet.
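
As a rough illustration of what “placing raw data into a data frame” means, the sketch below turns whitespace-aligned text into a 2-dimensional table of rows and named columns. The sample text, the `to_data_frame` function and the two-space column rule are assumptions made for this example, not a description of the platform’s parsers. A library such as pandas would normally hold the result, but plain Python structures keep the sketch dependency-free.

```python
import re

# Hypothetical raw text, as a PDF text parser might emit it for a small table.
RAW_TEXT = """Invoice   Customer      Amount
1001      Acme Ltd      250.00
1002      Birch & Co    1,120.50
"""


def to_data_frame(raw_text):
    """Split whitespace-aligned lines into a list of row dictionaries."""
    lines = [line for line in raw_text.splitlines() if line.strip()]
    # Assumption: runs of two or more spaces separate columns.
    cells = [re.split(r"\s{2,}", line.strip()) for line in lines]
    header, body = cells[0], cells[1:]
    return [dict(zip(header, row)) for row in body]


frame = to_data_frame(RAW_TEXT)
for row in frame:
    print(row)
```

Each dictionary is one row and each key is one column, which is the same shape a spreadsheet or data frame would give you.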

This data frame will then be put through large language models that are guided by tailored prompts to retrieve the data and make sense of it for onward consumption. Each method will create a readable, formatted table filled with the data, to which the Managed Smart Data Platform will assign a confidence score based on flexible and configurable parameters.

The higher the score, the greater the confidence our platform has in the accuracy of the table’s contents. Users will then be able to assess each table, modify anything that doesn’t look right or make any necessary additions to enrich the data. All this will be tracked by the platform to ensure the transparency and integrity of the data.
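
One way to picture a configurable confidence score is as a weighted average of simple quality checks. The checks, weights and sample tables below are hypothetical. The article does not describe the platform’s actual scoring parameters, so treat this purely as a sketch of the general idea.

```python
def fill_rate(table):
    """Share of cells that are not empty."""
    cells = [cell for row in table for cell in row]
    return sum(bool(cell.strip()) for cell in cells) / len(cells)


def row_consistency(table):
    """Share of rows whose width matches the header row."""
    width = len(table[0])
    return sum(len(row) == width for row in table) / len(table)


def is_number(value):
    """True if the text parses as a number once thousands separators are removed."""
    try:
        float(value.replace(",", ""))
        return True
    except ValueError:
        return False


def numeric_rate(table, column):
    """Share of body cells in `column` that parse as numbers."""
    values = [row[column] for row in table[1:] if len(row) > column]
    return sum(is_number(v) for v in values) / len(values) if values else 0.0


def confidence(table, weights, numeric_column):
    """Weighted average of the checks; `weights` is the configurable part."""
    checks = {
        "fill": fill_rate(table),
        "rows": row_consistency(table),
        "numeric": numeric_rate(table, numeric_column),
    }
    total = sum(weights.values())
    return sum(weights[name] * checks[name] for name in checks) / total


# Two hypothetical candidate tables produced by different extraction methods.
clean = [["Invoice", "Amount"], ["1001", "250.00"], ["1002", "1,120.50"]]
messy = [["Invoice", "Amount"], ["1001", "25O.OO"], ["1002", ""]]

weights = {"fill": 1.0, "rows": 1.0, "numeric": 2.0}
clean_score = confidence(clean, weights, numeric_column=1)
messy_score = confidence(messy, weights, numeric_column=1)
```

In this sketch the second table scores lower because one amount is missing and the other contains the letter "O" where zeros should be, a typical OCR slip. Changing the weights changes how heavily each kind of problem counts.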

Once a user has approved their presented data, it progresses into the Managed Smart Data Platform’s Medallion architecture for further refinement and validation. Here, it can be combined with other data sources to add extra value and provide further insight. Businesses can then extract any combination of data elements in whatever format they require. This can be done no matter the platform, application or end-use case an organisation has in mind.
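
A medallion architecture is commonly described as bronze (raw), silver (validated) and gold (business-ready) layers. The sketch below follows that general convention with made-up invoice data and a made-up region lookup. The function names and the rules in each layer are assumptions for illustration, not the platform’s internals.

```python
# Bronze: approved rows kept as extracted (hypothetical sample data).
bronze = [
    {"invoice": "1001", "customer": "Acme Ltd", "amount": "250.00"},
    {"invoice": "1002", "customer": "Birch & Co", "amount": "1,120.50"},
    {"invoice": "1003", "customer": "Acme Ltd", "amount": ""},
]

# A second, hypothetical data source to combine with the extracted data.
regions = {"Acme Ltd": "North", "Birch & Co": "South"}


def to_silver(rows):
    """Validate and type the bronze rows, dropping rows with no amount."""
    silver_rows = []
    for row in rows:
        if not row["amount"].strip():
            continue
        amount = float(row["amount"].replace(",", ""))
        silver_rows.append({**row, "amount": amount})
    return silver_rows


def to_gold(rows, region_lookup):
    """Enrich each row with a region and total the amounts per region."""
    totals = {}
    for row in rows:
        region = region_lookup.get(row["customer"], "Unknown")
        totals[region] = totals.get(region, 0.0) + row["amount"]
    return totals


silver = to_silver(bronze)
gold = to_gold(silver, regions)
```

Each layer leaves the previous one untouched, so the original extraction can always be traced back from a figure in the final output.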

With accessible, accurate and flexible data, businesses can make better, data-driven decisions with confidence. Further, with their staff freed from manual data extraction, they can focus on the higher-value tasks that drive their business forward.